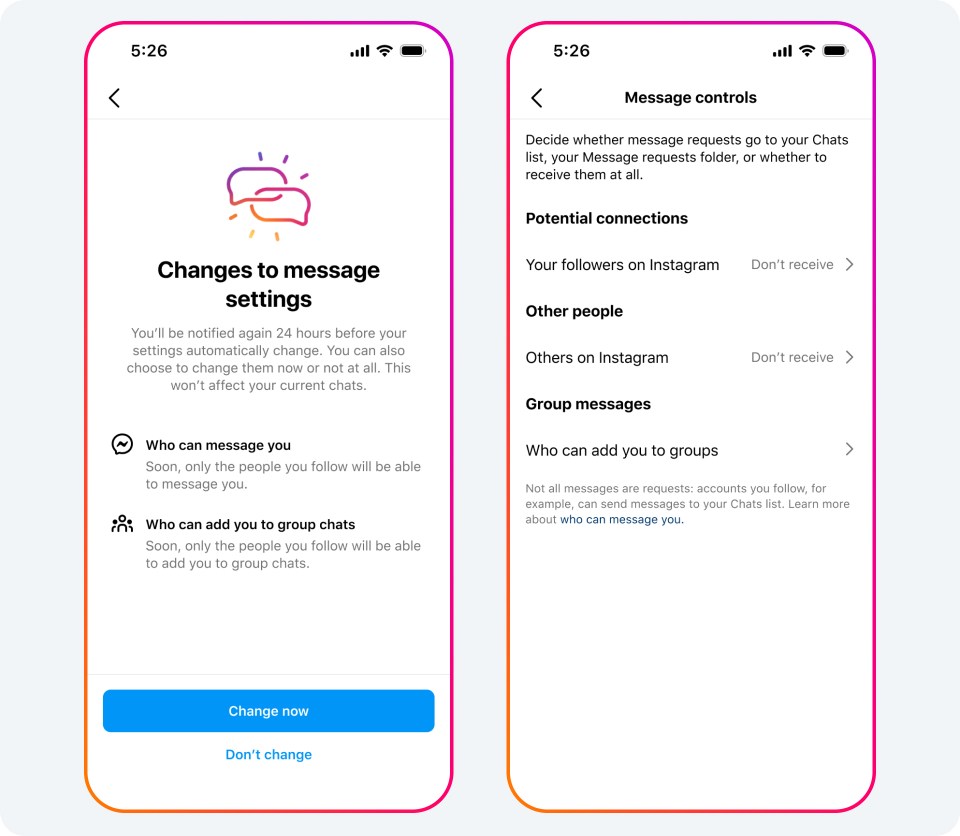

In 2021, Meta restricted adults on Instagram from messaging users under 18 who do not follow them. It is now expanding this rule to protect underage users from unwanted contact.

By default, users under the age of 16 or 18 (depending on the country) will no longer receive DMs from anyone they do not follow, even if the sender is another teenager. This new safety measure applies to both Instagram and Messenger.

The changes to Instagram and Facebook Messenger in a nutshell:

- They aim to better protect minors from unwanted online communication.

- They put more restrictions on who can send minors messages.

- They increase parental control over a child’s security settings.

- They are not 100 percent foolproof, as they rely on the user’s self-reported age and Meta’s technology for estimating people’s age.

“To help protect teens from unwanted contact on Instagram, we’re restricting adults over the age of 19 from messaging teens who don’t follow them,” Meta said in a statement. “We’re taking an additional step by turning off by default the ability to receive DMs from people they don’t follow and aren’t connected to on Instagram. Under this new setting, underage users can only receive messages from users they already follow and are connected to.”

Parental oversight tools on Instagram are also being expanded

Parental oversight tools will give parents more control over their child’s security and privacy settings on Instagram. When their child makes a change to their security and privacy settings, the parent will be asked to approve or reject it.

Meta also says it is developing a new feature to protect users from receiving unwanted or inappropriate images. There is no launch date yet, but the company says the feature will be used in encrypted chats and more information will be shared later this year.

Meta’s move comes in the wake of lawsuits and complaints centered on its underage user base. For example, a lawsuit filed by 33 states accuses the company of “targeting children under the age of 13 and continuing to collect their data despite knowing their age.”

A recent Wall Street Journal article reported that accounts following young influencers were being served ‘sexually explicit images of children as well as sexually explicit adult videos’. In December 2023, the state of New Mexico filed another lawsuit against Meta, claiming that Facebook and Instagram algorithms recommend sexual content to minors. As we reported recently, Meta employees estimate that 100,000 child users are harassed on Facebook and Instagram every day.

Compiled from Meta press release.